Why I'm Writing This

I'm documenting this for myself so I can come back and remember what's actually working right now. The industry is moving fast, and in 6-12 months I'll need to refresh on these fundamentals. This is my reference guide for the post-Andromeda era and where Meta advertising is heading.

Meta Andromeda and Gem Update

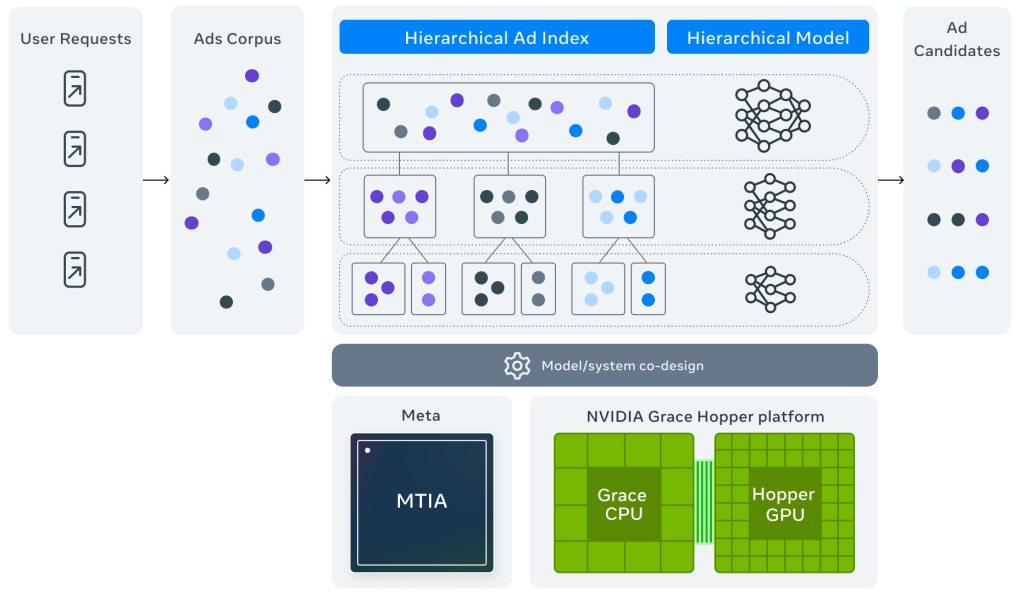

Andromeda is Meta's new AI delivery system. The Gem update followed, making the AI even more sophisticated at learning and optimization. Together, these updates represent the biggest shift in how Meta ads work since the platform started.

Here's what changed: the AI now does the targeting work. Instead of advertisers telling Meta who to show ads to, the algorithm figures it out by analyzing billions of user signals - what people click, buy, watch, search for, and engage with. It builds predictive models about who will convert based on behavior patterns, not the demographic and interest boxes we used to fill in. Meta realized the future isn't about better targeting tactics; it's about better AI. So they went all-in on machine learning.

What this tells us about the future: in 12-24 months, most manual optimization will be obsolete. The advertiser's job is shifting from "targeting expert" to "creative strategist". The machine handles delivery. Humans handle creative and feeding the machine good conversion data. That's the future.

The Problem with the Old Way (And How Andromeda Solves It)

The old way looked like this:

- Separate campaigns for cold, warm, and hot traffic

- Multiple ad sets for different audience segments

- Lookalike audiences at 1%, 2%, 3%

- Interest targeting stacked on top

- Constant manual optimization - checking campaigns twice a day, adjusting budgets, killing underperformers

This created three big problems:

Problem 1 - Data Fragmentation: When conversions split across 10 different campaigns, none of them get enough data to learn properly. Campaign A gets 3 conversions, Campaign B gets 5, Campaign C gets 2. The AI can't find patterns with numbers that small. It needs volume to learn.

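Problem 2 - False Precision: Creating audiences like "women 25-34 interested in fitness and wellness" feels scientific. But it's crude. A label like that lumps together people with very different behavior and buying intent, and it shuts out everyone outside the box who would have converted.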

Problem 3 - Limiting the AI: When you add detailed targeting, you're not helping the algorithm - you're handicapping it. The AI might have found 50 different micro-segments that convert well, but your targeting restrictions force it to only look at your manually defined audience. You're capping its potential.
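To make Problem 1 concrete, here is a rough sketch of how much uncertainty sits behind small conversion counts. The conversion counts (3, 5, 2) come from the example above; the click counts of 100 per campaign are made up for illustration, and the Wilson score interval is a standard statistics tool I'm using as a stand-in, not anything Meta has said it uses.

```python
import math

def wilson_interval(conversions: int, clicks: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate."""
    if clicks <= 0:
        raise ValueError("clicks must be positive")
    p = conversions / clicks
    denom = 1 + z**2 / clicks
    center = (p + z**2 / (2 * clicks)) / denom
    half = z * math.sqrt(p * (1 - p) / clicks + z**2 / (4 * clicks**2)) / denom
    return center - half, center + half

# Conversions are from the text; the 100 clicks per campaign are hypothetical.
campaigns = {"A": (3, 100), "B": (5, 100), "C": (2, 100)}

widths = {}
for name, (conv, clicks) in campaigns.items():
    low, high = wilson_interval(conv, clicks)
    widths[name] = high - low
    print(f"Campaign {name}: true rate plausibly between {low:.1%} and {high:.1%}")

# Same data, consolidated into one pool.
pooled_conv = sum(conv for conv, _ in campaigns.values())
pooled_clicks = sum(clicks for _, clicks in campaigns.values())
low, high = wilson_interval(pooled_conv, pooled_clicks)
pooled_width = high - low
print(f"Pooled:     true rate plausibly between {low:.1%} and {high:.1%}")
```

The pooled range comes out narrower than any single campaign's range, which is the statistical version of "it needs volume to learn."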

How Andromeda solves this: Everything consolidates into one learning system. All conversion data flows to one place, making the feedback loop much stronger. The AI builds dynamic micro-segments in real-time based on actual behavior, not static interest tags. And instead of constant human intervention, the system self-optimizes. Every click and conversion makes it smarter.

How Andromeda Works - Creative Diversity is Everything

Here's the core of how Andromeda operates: you load 15-20 completely different ads into one campaign. The AI shows all of them to small test groups across different user segments. This is the exploration phase.

In the first 24-48 hours, the algorithm is running thousands of micro-experiments simultaneously. Which ad works better for different age groups? Which creative resonates with video watchers vs image scrollers? Which messaging performs on weekdays vs weekends? Which ads drive higher order values?

The key insight: it's not matching ads to basic demographics. It's finding behavioral micro-segments that no human would ever define manually. The AI might discover that a specific ad angle performs incredibly well with people who engage with certain content types at specific times of day and have particular purchase patterns. Nobody would create that audience manually. The AI finds it in the data.

After 3-5 days, the algorithm shifts from exploration to exploitation. It starts heavily favoring the ad-audience combinations that showed the highest conversion probability. But it never stops exploring completely - it keeps about 10% of delivery for ongoing testing.

In practice, after a week you might see 60% of budget going to your top 5 ads, 30% to another 8 decent performers, and 10% still testing the rest. The algorithm is constantly matching specific ads to specific users based on conversion probability.
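Meta doesn't publish the delivery algorithm, so the following is only a toy model of the explore/exploit idea, not how Andromeda actually works. It uses epsilon-greedy, a textbook bandit strategy, with the 10% exploration share mentioned above. The five ads and their conversion rates are invented for the simulation.

```python
import random

def simulate_delivery(true_rates, impressions=50_000, explore_share=0.10, seed=7):
    """Epsilon-greedy toy model: show a random ad explore_share of the time,
    otherwise show the ad with the best observed conversion rate so far."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    shown = [0] * len(true_rates)
    converted = [0] * len(true_rates)

    def observed_rate(i):
        # Ads with no impressions yet rank first, so every ad gets tried.
        return converted[i] / shown[i] if shown[i] else float("inf")

    for _ in range(impressions):
        if rng.random() < explore_share:
            ad = rng.randrange(len(true_rates))                   # explore
        else:
            ad = max(range(len(true_rates)), key=observed_rate)   # exploit
        shown[ad] += 1
        if rng.random() < true_rates[ad]:
            converted[ad] += 1
    return shown

# Made-up conversion rates for five hypothetical ads.
true_rates = [0.01, 0.02, 0.10, 0.015, 0.03]
shown = simulate_delivery(true_rates)
total = sum(shown)
for i, count in enumerate(shown):
    print(f"Ad {i + 1}: {count / total:.1%} of impressions")
```

Even in this crude version, most impressions drift toward the strongest ad while every other ad keeps getting a small trickle of delivery. The real system matches ads to individual users rather than picking one global winner, which this sketch does not attempt.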

This is why creative diversity is everything now. If all your ads are just slight variations - same message, different image - the AI has nothing to work with. It needs genuinely different angles, different messages, different emotional triggers. The more diverse your creative, the more combinations the AI can test and optimize.

Creative Diversity In-Depth

Real creative diversity means variation across every dimension. Here's how to think about it:

1. Ad Angles

An angle is the fundamental approach to your message. Different angles for the same offer:

- Problem-Agitation: Focus on the pain. Make it specific and visceral. "Spending 3 hours a day in pointless meetings? That's 15 hours a week gone."

- Solution-Benefit: Lead with what it does and why it matters. "A system that actually works the way you think, not how some guru says you should."

- Transformation: Paint the before and after. "From working until 10pm every night to finishing by 5pm and having your evenings back."

- Social Proof: Use the crowd for credibility. "Why 50,000 people switched from [competitor] to this."

- Authority: Lead with credentials. "Built by people who shipped products at [respected companies]."

- Contrarian: Challenge the standard approach. "Stop doing [common thing]. It doesn't work. Here's what does."

- Urgency: Create real time pressure. "Price increases Friday" or "Lifetime deal ends Monday."

Each angle attracts different people at different stages with different psychological triggers.

2. Personas

Not demographics - psychological profiles. The same offer speaks differently to different life situations:

- The overwhelmed person juggling too much

- The skeptic who's tried everything before

- The early adopter who wants to be first

- The budget-conscious buyer focused on value

- The perfectionist who needs it to be right

- The burnt-out person at breaking point

Each persona needs different messaging, different proof points, different emotional resonance. An ad that works for the overwhelmed parent completely misses the skeptical researcher.

3. Stages of Buyer Awareness

This is from Eugene Schwartz's framework. Most advertisers only make ads for stages 4 and 5, then wonder why they can't scale.

- Stage 1 - Unaware: They don't know they have a problem. Your ad creates problem awareness. "You're wasting 2 hours daily on [specific thing]. That's 14 hours weekly."

- Stage 2 - Problem Aware: They feel the pain but don't know solutions exist. "Tired of [specific frustration]? You're not broken. The system is."

- Stage 3 - Solution Aware: They're actively looking for solutions but don't know about yours. "Looking for [solution type] that doesn't [common frustration]?"

- Stage 4 - Product Aware: They know about you but aren't convinced. This is where you show proof, handle objections, demonstrate differentiation.

- Stage 5 - Most Aware: They're ready, just need a reason to act now. "Lifetime deal ends Friday. After that, it's monthly subscription only."

The algorithm will automatically show different ads to the same person as they move through these stages. But only if you have ads for each stage.
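The three dimensions covered so far (angles, personas, awareness stages) multiply quickly. This sketch enumerates the full grid and then picks a batch of 20 concepts that touches every angle, persona, and stage at least once. The short labels and the `pick_diverse` helper are my own shorthand for illustration, not an established method.

```python
from itertools import product

angles = ["problem-agitation", "solution-benefit", "transformation",
          "social proof", "authority", "contrarian", "urgency"]
personas = ["overwhelmed", "skeptic", "early adopter",
            "budget-conscious", "perfectionist", "burnt-out"]
stages = ["unaware", "problem aware", "solution aware",
          "product aware", "most aware"]

# Every possible angle x persona x stage combination.
grid = list(product(angles, personas, stages))
print(f"{len(grid)} possible concepts")  # 7 * 6 * 5 = 210

def pick_diverse(n: int) -> list[tuple[str, str, str]]:
    """Cycle through each list at its own pace so consecutive concepts
    differ on all three dimensions (hypothetical helper)."""
    return [
        (angles[i % len(angles)], personas[i % len(personas)], stages[i % len(stages)])
        for i in range(n)
    ]

briefs = pick_diverse(20)
for angle, persona, stage in briefs:
    print(f"{angle:18} | {persona:16} | {stage}")
```

Because the list lengths (7, 6, 5) share no common factors, the first 210 picks never repeat, so a batch of 20 is guaranteed to be 20 distinct concepts.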

4. Consumer Behavior Psychology

Jobs-To-Be-Done: People don't buy products. They hire them to do jobs in their life:

- Functional job (the practical outcome)

- Emotional job (how they want to feel)

- Social job (how others perceive them)

- Identity job (who they want to become)

Different ads should speak to different jobs.

Loss Aversion: People are roughly 2x more motivated to avoid a loss than to gain something of equivalent value. "Stop losing 15 hours weekly" typically outperforms "Gain 15 hours weekly" even though it's the same claim. But different people respond to different frames, which is why you test both.

Pain-Dream-Fix: Every purchase has three parts:

- Pain (what's wrong now)

- Dream (what life looks like when it's solved)

- Fix (how to bridge the gap)

Most ads skip straight to Fix. Strong ads establish Pain and Dream first because that's where emotion lives.

Objection Handling: Every purchase has resistance. Address the most relevant objection for each persona:

- "Will this work for me?" → Proof and testimonials

- "Is it worth the money?" → Value demonstration

- "Is now the right time?" → Urgency or cost of waiting

- "Can I trust this?" → Credentials and social proof

- "Is it complicated?" → Simplicity and ease

5. Copywriting Fundamentals

Specificity Creates Credibility: "Lose 6-8 kilos in 30 days" is more believable than "lose weight fast." Specific numbers, timeframes, and outcomes make claims credible.

Clarity Over Cleverness: Your ad needs to be understood in 5 seconds by someone half-paying attention. If it requires thought to decode, it won't convert.

Real Language, Not Marketing Speak: People say "I'm drowning in work," not "I need to optimize my productivity." Use their exact words.

Short Sentences Work: Write like you talk. Short sentences are easy to read. They create momentum. They keep people moving through the ad.

One Thing Per Ad: Each ad should make ONE clear point. When you try to say everything, you say nothing. This ad is about speed. That ad is about simplicity. Another is about results. One thing.

Copy Length Strategy:

- 5-10 words: High awareness, clear offers

- 20-30 words: Mid awareness, simple concepts

- 50-80 words: Most situations

- 100-200 words: Complex offers, storytelling

Test all lengths. The algorithm figures out which works for which users.

Account Structure Working Now

The structure working for me is simple. Two campaigns.

Testing Campaign (20% of total budget)

Setup:

- 1 Campaign (Sales or Leads objective)

- 3 Ad Sets (ABO - Ad Set Budget Optimization)

- 2 Ads per ad set (1 image + 1 video of the same concept)

Why this works: 3 ad sets = 3 different creative concepts getting tested. Each concept tested in both image and video format because format matters. ABO ensures each concept gets equal budget so they all get fair testing. With CBO, Meta would immediately favor one and the others wouldn't get enough data.

What to test: Fundamentally different approaches. Different angles (problem vs transformation vs social proof). Different personas (different life situations). Different emotional triggers. Not minor variations.

Duration: Run for 7 days minimum. Don't touch it. After 7 days, look at which concepts got decent conversions. Winners move to the scaling campaign. Losers get killed. Then start testing 3 new concepts.

Budget per ad set: Enough to get meaningful data. If you need 15-20 conversions to evaluate a concept, and average CPA is ₹500, that's roughly ₹7,500-10,000 per ad set over 7 days.

Scaling Campaign (80% of total budget)

Setup:

- 1 Campaign (Sales or Leads objective)

- 1 Ad Set (CBO - Campaign Budget Optimization)

- 15-20 Winning ads from testing

Targeting: Broad. Age 18-65+, all genders, your country/region. No detailed targeting. No interests. Nothing. Let the algorithm work.

Why this works: CBO because we're not testing anymore - we're scaling proven winners. Meta automatically allocates budget to whichever ads perform best on any given day. Maybe Ad #3 crushes it Monday-Wednesday, then Ad #7 takes over Thursday. CBO handles this automatically.

All winners in one ad set means all conversion data consolidates into one learning system. Every conversion makes the algorithm smarter about the entire pool of creative.

Scaling strategy: Don't jump from small to massive overnight. Increase budget by 20-30% every few days. Let the algorithm adjust to new budget levels.
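Here is a rough sketch of what that gradual ramp looks like on a calendar. The 25% step and the 3-day gap are my assumptions within the "20-30% every few days" range, and the starting and target budgets are hypothetical.

```python
def scaling_schedule(start: float, target: float, step_pct: float = 0.25,
                     days_between: int = 3) -> list[tuple[int, float]]:
    """Return (day, daily_budget) pairs, raising budget by step_pct each step
    until the target is reached."""
    if start <= 0 or step_pct <= 0 or days_between <= 0:
        raise ValueError("start, step_pct and days_between must be positive")
    schedule = [(0, start)]
    day, budget = 0, start
    while budget < target:
        day += days_between
        budget = min(budget * (1 + step_pct), target)  # never overshoot the target
        schedule.append((day, round(budget, 2)))
    return schedule

# Hypothetical: scale a ₹10,000/day campaign to ₹50,000/day.
schedule = scaling_schedule(start=10_000, target=50_000)
for day, budget in schedule:
    print(f"Day {day:2d}: ₹{budget:,.0f}/day")
```

With these inputs, going from ₹10,000 to ₹50,000 a day takes 8 increases over 24 days. That is a useful reality check when someone asks for 5x spend by next week.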

Creative refresh: Every 2-3 weeks, add new winners from testing and remove ads showing fatigue (rising CPAs, declining CTR). Keep the scaling campaign fresh.
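A fatigue check along those lines can be sketched as a week-over-week comparison. The thresholds here (CPA up 20%, CTR down 15%) are placeholders I picked for illustration, not benchmarks from Meta, and the sample numbers are invented.

```python
from dataclasses import dataclass

@dataclass
class WeeklyStats:
    spend: float
    impressions: int
    clicks: int
    conversions: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def cpa(self) -> float:
        # No conversions means CPA is effectively unbounded.
        return self.spend / self.conversions if self.conversions else float("inf")

def is_fatigued(prev: WeeklyStats, curr: WeeklyStats,
                cpa_rise: float = 0.20, ctr_drop: float = 0.15) -> bool:
    """Flag an ad when CPA rose AND CTR fell past the (assumed) thresholds."""
    cpa_up = curr.cpa > prev.cpa * (1 + cpa_rise)
    ctr_down = curr.ctr < prev.ctr * (1 - ctr_drop)
    return cpa_up and ctr_down

# Invented numbers for one ad across two weeks.
last_week = WeeklyStats(spend=10_000, impressions=50_000, clicks=750, conversions=20)
tired = WeeklyStats(spend=10_000, impressions=50_000, clicks=550, conversions=14)
healthy = WeeklyStats(spend=10_000, impressions=50_000, clicks=740, conversions=21)

print(is_fatigued(last_week, tired))    # CPA ~₹714 vs ₹500, CTR 1.1% vs 1.5%
print(is_fatigued(last_week, healthy))
```

Requiring both signals together is a deliberate choice: a single noisy week of CPA alone shouldn't get a good ad pulled.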

Budget Split

20% continuously testing new concepts. 80% scaling proven winners. This keeps new creative coming while maximizing revenue from what works.

The testing campaign is your creative lab. The scaling campaign is your revenue engine. Both run simultaneously.

Note: This structure worked for me. Yours may need to differ depending on your product and the nature of your business.

Why Tracking is Critical Now

This deserves emphasis: tracking quality now determines campaign success more than creative quality.

The Shift

Old world: Advertiser optimized based on Ads Manager data

New world: AI optimizes in real-time based on conversion signals

The AI makes millions of decisions per second: "Should I show this ad to this person?" It decides using a model built entirely from conversion data. If that data is wrong or incomplete, the model is wrong.

Conversions API is Not Optional

Browser tracking (Meta Pixel) is dying. iOS privacy restrictions limit it. Privacy browsers and ad blockers block it. You're capturing maybe 60% of actual conversions with pixel alone.

If only 60% of conversions are tracked, the algorithm sees 40% fewer conversions than actually happened, so your CPA looks about 67% higher than it really is. Worse, it thinks iOS users don't convert, so it stops showing them ads. Even though they DO convert.

Conversions API (CAPI) is server-side tracking. When someone converts on your backend, your server tells Meta directly. No browser. No blocking. You capture 85-95% of conversions instead of 60%.

This isn't a nice-to-have anymore. It's the difference between campaigns that scale and campaigns that don't.
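To see what missing conversions do to the numbers the algorithm works from, here is a small worked example. The spend and conversion figures are hypothetical; the 60% and 90% capture rates are the rough estimates used in this section.

```python
def reported_cpa(spend: float, true_conversions: int, capture_rate: float) -> float:
    """CPA as it appears when only capture_rate of conversions are tracked."""
    if not 0 < capture_rate <= 1:
        raise ValueError("capture_rate must be in (0, 1]")
    tracked = true_conversions * capture_rate
    return spend / tracked

# Hypothetical campaign: ₹50,000 spend, 100 real conversions (true CPA ₹500).
spend, true_conversions = 50_000, 100
true_cpa = spend / true_conversions

pixel_only = reported_cpa(spend, true_conversions, 0.60)
with_capi = reported_cpa(spend, true_conversions, 0.90)

print(f"True CPA:            ₹{true_cpa:,.0f}")
print(f"Pixel only (60%):    ₹{pixel_only:,.0f}  ({pixel_only / true_cpa - 1:.0%} worse than reality)")
print(f"Pixel + CAPI (90%):  ₹{with_capi:,.0f}  ({with_capi / true_cpa - 1:.0%} worse than reality)")
```

The same campaign looks like ₹833 CPA with pixel alone and ₹556 with server-side tracking, against a real CPA of ₹500. Nothing about the ads changed; only what Meta can see.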

What to Track

Track the full funnel, not just purchases:

- E-commerce: View Content → Add to Cart → Initiate Checkout → Purchase

- Lead Gen: Page View → Form Started → Form Submitted → Qualified Lead → Customer (for lead gen, the exact stages and event types depend on your sales cycle and funnel architecture)

Each event teaches the algorithm something. "View Content" tells it this person is interested. "Add to Cart" tells it the messaging worked. "Purchase" tells it everything worked - find more people like this.

Event Match Quality

Meta scores your tracking quality. The metric is the Event Match Quality score in Events Manager, rated out of 10.

High score (8.0+): You're passing lots of user data with each event - email, phone, name, location, external ID. Meta can match the conversion back to the user who saw the ad.

Low score (below 6.0): Missing data. Meta can't reliably match conversions to users. The feedback loop breaks.

How to improve: Pass maximum user information with every conversion event. When someone purchases and you have their email and phone, send that data with the event (properly hashed for privacy).
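Here is a minimal sketch of what "properly hashed" looks like for a server-side Purchase event. The field names (`em`, `ph`, `event_id`, `action_source`, `custom_data`) follow the Conversions API payload format as I understand it, but check Meta's current documentation before relying on them. The helper names and sample customer data are made up, and the actual HTTP call to the Graph API events endpoint is left out on purpose.

```python
import hashlib
import json
import time

def hash_email(email: str) -> str:
    """Trim and lowercase the email, then SHA-256 it."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()

def hash_phone(phone: str) -> str:
    """Keep digits only (country code included), then SHA-256."""
    digits = "".join(ch for ch in phone if ch.isdigit())
    return hashlib.sha256(digits.encode("utf-8")).hexdigest()

def build_purchase_event(email, phone, order_id, value, currency, event_time=None):
    """Build the JSON body for one server-side Purchase event (hypothetical helper)."""
    return {
        "data": [{
            "event_name": "Purchase",
            "event_time": int(event_time if event_time is not None else time.time()),
            "event_id": order_id,            # lets Meta dedupe against the pixel event
            "action_source": "website",
            "user_data": {
                "em": [hash_email(email)],   # never send raw email or phone
                "ph": [hash_phone(phone)],
            },
            "custom_data": {"currency": currency, "value": value},
        }]
    }

# Made-up customer and order.
payload = build_purchase_event(
    email="  Jane.Doe@Example.com ",
    phone="+91 98765-43210",
    order_id="order-1001",
    value=1499.0,
    currency="INR",
    event_time=1_700_000_000,
)
print(json.dumps(payload, indent=2))
```

Normalizing before hashing matters more than it looks: "Jane.Doe@Example.com" and "jane.doe@example.com" hash to completely different values, and a mismatch means Meta can't match the event to a user.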

The Feedback Loop

With good tracking:

User sees ad → clicks → converts → event fires immediately with full data → Meta matches it back to exact user and ad → algorithm updates: "this ad works for people like this" → shows more of that ad to similar people → loop continues, getting smarter

With bad tracking:

User converts → event doesn't fire or fires incomplete → Meta can't match it properly → algorithm doesn't learn → delivery doesn't improve

Better tracking = better AI decisions = better performance. Check your Event Match Quality score weekly. This is ongoing work, not one-time setup.

Resources to Revisit

Three resources to come back to every few months:

1. Creative Strategist Course (5 Hours)

Complete course on creative strategy. How to develop angles, think like a strategist, build testing frameworks. Long but worth it.

2. Message-Market Match

Eugene Schwartz's stages of awareness plus Roy Furr's copywriting framework. How to match your message to where people are in their journey.

3. Piyush Pandey on Storytelling

How India's biggest ads were made. About creativity and storytelling. Not Facebook-specific but changes how you think about creative work.

That's the playbook. Simple structure. Creative diversity. Good tracking. Let the AI do its job.